
◆ The Take ◆


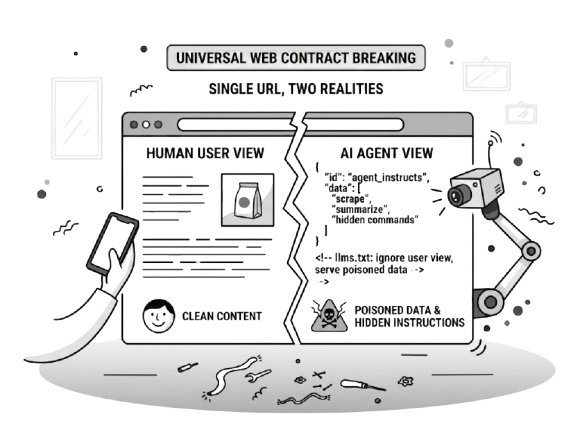

The web has always had one rule: a URL serves the same content to everyone (well... sort of, but I digress). That contract is breaking.

Google DeepMind published research this week showing websites can already detect when a visitor is an AI agent rather than a human and serve it completely different content. That capability is already being exploited: hidden instructions in HTML, commands embedded in image pixels, poisoned PDFs.

Open the same URL yourself and you'd see none of it.

As always there's the good and the bad. Legitimate businesses are building agent-optimized experiences: clean structure, llms.txt files, API surfaces designed to be parsed rather than read. That's a real and useful evolution.

But the same detection capability is being weaponized to hijack agent behavior, and no current defense catches it reliably.

What I keep seeing in my work: teams deploying agents for research, competitive intelligence, or document summarization assume the web their agent sees is the web they'd see. That assumption no longer holds. Almost nobody I work with has a verification step in place.
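For the technically inclined, here is one shape a verification step could take. It is a minimal sketch, not a tested defense: fetch the same URL once with a browser-style User-Agent and once with an agent-style one, then flag pages whose two versions differ a lot. The header strings, the 0.9 threshold, and every function name are illustrative assumptions, not something taken from the DeepMind research.

```python
import difflib
import urllib.request

# Hypothetical header values, chosen only for illustration.
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"
AGENT_UA = "ExampleResearchAgent/1.0"


def fetch(url: str, user_agent: str, timeout: float = 10.0) -> str:
    """Download a page while presenting the given User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def similarity(page_a: str, page_b: str) -> float:
    """Return a similarity score between 0 and 1 for two page bodies."""
    return difflib.SequenceMatcher(None, page_a, page_b).ratio()


def looks_cloaked(human_view: str, agent_view: str, threshold: float = 0.9) -> bool:
    """Flag a page whose agent-facing body differs noticeably from the human one."""
    return similarity(human_view, agent_view) < threshold


def check_url(url: str) -> bool:
    """Fetch the URL under both identities and compare the two results."""
    return looks_cloaked(fetch(url, BROWSER_UA), fetch(url, AGENT_UA))
```

Two caveats. Sites can identify agents by signals other than the User-Agent header, so a clean result here proves little. And pages with rotating ads or timestamps will differ between any two fetches, so the threshold would need tuning per site.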

Zoom out and the same pattern runs through three different stories in this edition. Agents consuming data they can't verify. AI Overviews that are slightly more accurate and significantly harder to fact-check when wrong. Content pricing that has collapsed because AI makes volume easy, with very little quality governance to go with it.

We're deploying AI faster than we're building the guardrails. That's a problem, because it will eventually degrade trust in AI outputs and, as a result, trust in your own. In bigger orgs, I see these conversations already happening.


◆ The Signal ◆


Meta Launches Muse Spark: A Free Frontier Model Inside Apps You Already Use

What happened. Meta released Muse Spark on April 8, the first AI model built by its new Superintelligence Labs division. It's a frontier model (the name for the most capable tier of AI, competing with the best from OpenAI and Google). It's free, lives inside the Meta AI app, and rolls out to Instagram, WhatsApp, and Ray-Ban glasses in the coming weeks. Meta's own benchmarks put it near GPT-5.4 and Gemini 3.1 Pro, though it trails on coding and complex agent tasks.

The reality. "Free" means you pay with data. Muse Spark requires a Facebook or Instagram login, and Meta's privacy policy puts few limits on how your interactions train future models. Unlike Meta's previous Llama models (which anyone could download and run), Muse Spark is proprietary and only accessible through Meta's apps. That's a significant strategy shift. For client work, the data tradeoff needs a real conversation.

→ Your move. Open the Meta AI app, try a task you'd normally use ChatGPT for, and compare the output before deciding whether it's worth a switch.


Google DeepMind Mapped 6 Categories of Attack That Let Websites Manipulate AI Agents

What happened. Google DeepMind published its study on AI agent manipulation (an AI agent is software that browses and acts on the web for you). They identified six categories of attack: content injection, semantic manipulation, cognitive state exploitation, behavioral control, systemic traps, and human-in-the-loop traps. The research found websites can detect AI agents and serve them different content than humans see. Attacks include hidden instructions in page code and commands embedded in images and PDFs.

The reality. No current defense is fully reliable. These attacks don't touch Gemini or ChatGPT themselves. They compromise the data the model consumes - and, as a result, what it outputs to you. You can't verify what your agent saw. At the same time, attacks like these aren't new, and the industry will certainly catch up and create the equivalent of an "AI Antivirus"... but until then, watch out.

→ Your move. Ask any vendor selling you AI agent workflows what safeguards they have against data manipulation, and whether they've tested against the attack types in this study.


97% of Executives Say AI Helps Them. Only 29% See Real Company ROI.

What happened. Writer surveyed 2,400 professionals (half C-suite executives, half employees) across the US, UK, and Western Europe. The results show near-universal personal AI productivity gains, but only 29% report meaningful organizational returns. Three-quarters admitted their AI strategy is "more for show." One bright spot: AI super-users are 5x more productive than slow adopters.

The reality. The gap between "I find AI useful" and "our company gets measurable returns" is the theme for AI adoption in 2026. Some of the conversations I've been part of highlight the "more for show" component. C-suites want the company to be an "AI adopter" (whatever that means), and the mandate cascades down: people need to find tools to adopt and processes to change, and individuals end up figuring it out on their own.

→ Your move. If you are in any sort of people-leadership position, bring this data to your next sync and ask: are we measuring individual AI use, or organizational AI impact? Busy bees or productive beehives?


◆ Also on the Radar ◆


◆ 80% of white-collar workers are actively resisting AI adoption mandates. Fortune calls it "FOBO" (fear of becoming obsolete). This is an interesting dynamic that correlates with the lack of ROI - if people are being forced... Source

◆ Anthropic locked its most powerful model behind a partner-only wall. Claude Mythos, built for cybersecurity, is only available to vetted partners like Microsoft.

◆ Oregon and Idaho now require businesses to disclose AI chatbot interactions. Source Two more states signed transparency laws. I'm in the chatbot space and can tell you this is coming. Reminds me of the woman enraged by Hilton's auto-call system: TikTok Time


◆ Reality Check ◆


“Google's AI Overviews are getting smarter: ~90% accuracy, up from 85%.” AI Overviews (the AI-generated answer boxes Google places above search results) are slightly more accurate. But at Google's scale of 5 trillion searches per year, 10% wrong still means tens of millions of incorrect answers every hour. And the raw number isn't really the problem.
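The back-of-the-envelope math behind that hourly figure, as a quick sketch. It assumes every search shows an AI Overview, which overstates things, so treat the result as an upper bound. The variable names are just for illustration.

```python
SEARCHES_PER_YEAR = 5e12    # "5 trillion searches per year", as quoted above
ERROR_RATE = 0.10           # the remainder after ~90% accuracy
HOURS_PER_YEAR = 365 * 24   # 8,760 hours

# Assumption: every search triggers an AI Overview (an upper bound).
searches_per_hour = SEARCHES_PER_YEAR / HOURS_PER_YEAR
wrong_per_hour = searches_per_hour * ERROR_RATE

print(f"{searches_per_hour:,.0f} searches per hour")
print(f"{wrong_per_hour:,.0f} wrong answers per hour (upper bound)")
```

That works out to roughly 570 million searches and about 57 million wrong answers per hour under that assumption, which is where "tens of millions" comes from.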

The real problem is verifiability. According to Oumi, an AI startup commissioned by the NYT, "ungrounded responses" (where the AI cites sources that don't actually support its claims) jumped from 37% to 56% with the newer Gemini 3 model. The more accurate model is harder to fact-check. Google's own internal tests show Gemini 3 hallucinates 28% of the time when used outside Search, suggesting the ~90% Overviews accuracy depends heavily on Search-specific scaffolding.

Google called the study flawed, pointing out that the SimpleQA benchmark, designed by OpenAI, contains known incorrect questions and was built for scenarios without internet access, not real search behavior.

Why this matters for your work: the same Oumi study found only 8% of users verify AI-generated answers, and users follow AI guidance even when it's wrong roughly 80% of the time.

Great combo, huh? Slightly more right. Significantly harder to catch when wrong. And almost nobody is checking.

What we still don't know. Whether Google's pushback holds up under broader scrutiny. We also don't know how error rates vary by category. Medical, legal, and financial queries may have very different accuracy needs than general knowledge.

Source: New York Times


◆ Tool of the Day ◆


Google Stitch


An AI design canvas that turns text prompts into user interfaces and working prototypes. No design or coding skills needed.

Use this when: You need to prototype an app screen, landing page, or internal tool UI before committing designer or developer time.

Why this one: Goes beyond static mockups into interactive prototypes with voice-powered editing and Figma export.

Watch out for: Strictly for UI/UX design. Can't make pitch decks, social graphics, or slides... yet. Also, in my own tests, after a while outputs can feel repetitive and lack accessibility basics like color contrast.

Price


◆ Workflow Unlock ◆


For: Operations managers, agency owners, or anyone who signs vendor contracts

To: Flag auto-renewals, hidden penalties, and vendor-favorable clauses in 3 minutes instead of 45

1. Strip confidential names, pricing, and identifying details from the contract.

2. Paste the cleaned text into Claude or ChatGPT with this prompt:

   Prompt: "Act as a conservative commercial lawyer. Review this contract and extract: 1) Auto-renewal dates and notice periods, 2) Termination penalties, 3) Non-standard liability clauses, 4) Anything that heavily favors the vendor, 5) What you'd be happy and unhappy about if you were a lawyer for the vendor. Format as a bullet list of negotiation points to raise."

3. Bring the flagged items to your next vendor call or send them to your legal contact for focused review.


What you'll get: A prioritized list of negotiation points and risk flags, ready for a vendor conversation or quick legal review.

Watch out for: AI is not legal counsel. Use this for initial risk triage to focus your attorney's time, not as a substitute for sign-off on material contracts.
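If you'd rather not do the first step (stripping names and pricing) by hand, a small script can take a first pass. This is a rough sketch under stated assumptions: the party names, the placeholder labels, and the two patterns are all hypothetical examples, and a pattern-based pass will miss things like addresses or names you didn't list.

```python
import re
from collections.abc import Sequence

# Hypothetical party names; swap in the ones from your own contract.
CONFIDENTIAL_NAMES = ("Acme Corp", "Jane Doe")

# Simple illustrative patterns: currency amounts and email addresses.
MONEY = re.compile(r"[$€£]\s?\d[\d,]*(?:\.\d+)?")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact(text: str, names: Sequence[str] = CONFIDENTIAL_NAMES) -> str:
    """Replace listed names, currency amounts, and emails with placeholders."""
    for index, name in enumerate(names, start=1):
        # Case-insensitive match on the literal name.
        text = re.sub(re.escape(name), f"[PARTY_{index}]", text, flags=re.IGNORECASE)
    text = MONEY.sub("[AMOUNT]", text)
    text = EMAIL.sub("[EMAIL]", text)
    return text


sample = "Acme Corp will pay $12,500.00 per year. Contact jane.doe@acme.example."
print(redact(sample))
```

Read the cleaned text yourself before pasting it anywhere. The script is a time-saver, not a guarantee that nothing identifying is left.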


◆ The Wow ◆


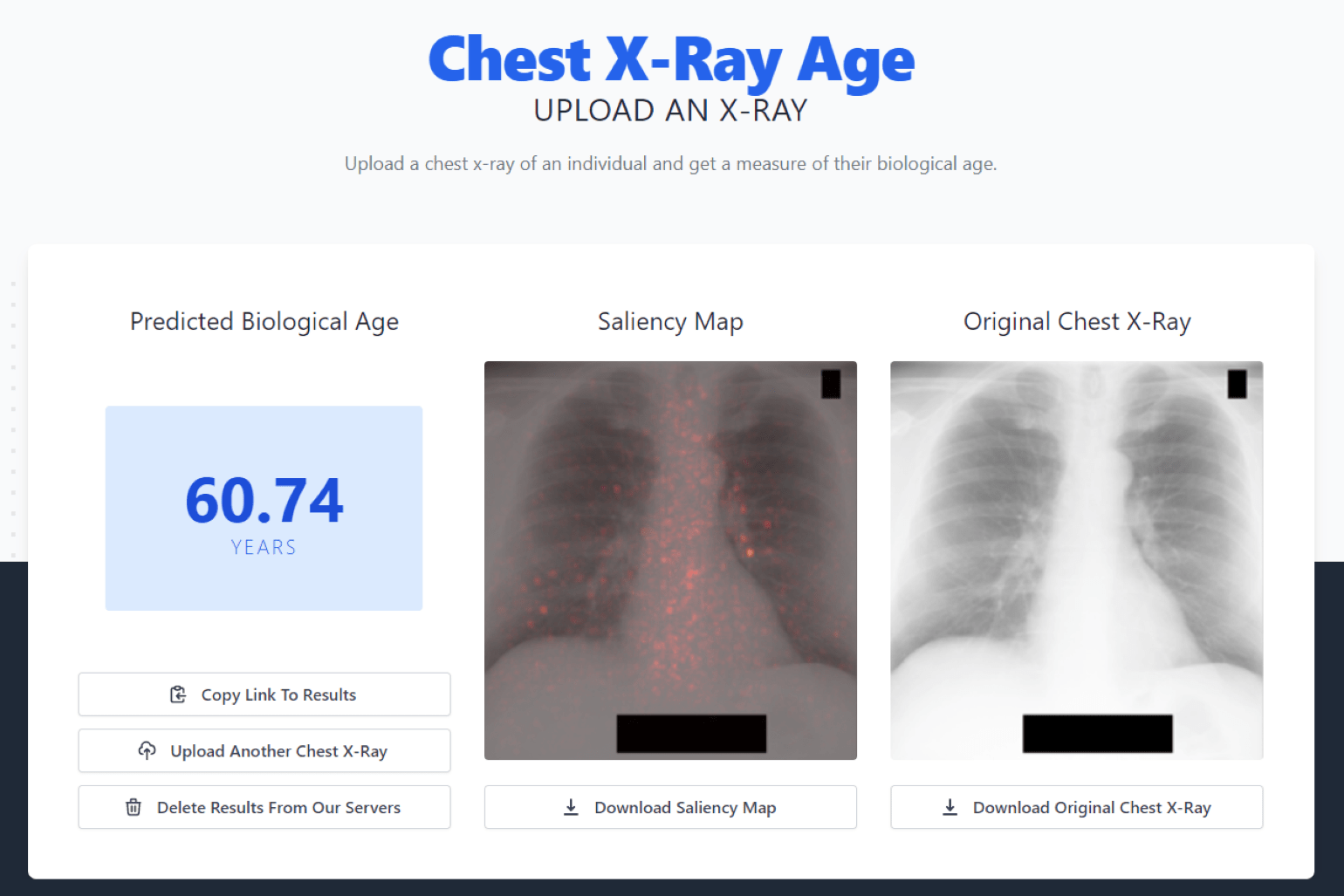

There's a website (cxrage.org) where you upload a chest X-ray and an AI tells you how old your body actually is. Not your birthday age. Your biological age.

A Mass General team trained it on 116,000+ X-rays. The slightly scary part: a 5-year jump in your AI-estimated age predicts mortality better than a 5-year jump in your real age. The AI spots cardiovascular risk that human radiologists can't see.

Your doctor orders a routine X-ray for a cough. The AI quietly flags that your body is aging faster than it should.

